1 April 2026

What Running an AI-First Fintech Actually Looks Like in 2026

by Nicholas Holden

We started building Banqora in 2024 (we are now two years old!), but the idea had been brewing for much longer. Back then, you would be lucky to get anything useful out of an interaction with an AI model. I remember watching someone generate a Pong clone in a browser from a single prompt and thinking it was the start of a new era, and watching someone convert a chunk of code from one language to another and barely believing it. However, hallucination was a major problem, bugs were frequent, and it felt like the technology might be stuck as a parlour trick: entertaining, maybe useful for prototyping, probably not something you'd build a company on.

We bet on it anyway. Every few months since, the tooling has taken another leap, and we have adopted each one aggressively. The gap between that first buggy Pong game and what we run today is enormous. Multiple years of compounding improvements, each applied immediately, have turned what looked like a toy into core infrastructure.

The result is a company that produces the output of a much larger organisation. We ship fast, maintain institutional-grade standards for capital markets, and spend our engineering time on the problems that actually matter. Here's how that works in practice, and where we have learned that AI still has hard limits.

How We Actually Operate

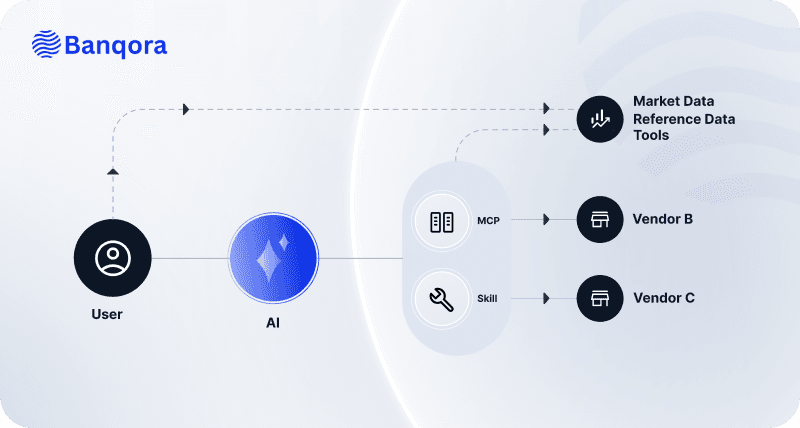

One principle runs through everything we build: every AI agent gets the minimum set of tools it needs for its job, and access to nothing else. In regulated financial services, "an AI that can do anything" is a liability. An AI scoped to a specific task, with specific, individually granted permissions, is an asset.
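To make the least-privilege principle concrete, here is a minimal sketch of per-agent tool scoping, assuming a simple in-process dispatcher sits between each agent and its tools. `AgentScope`, `ToolDispatcher`, `ToolNotPermitted`, and the tool names are hypothetical, chosen for illustration rather than taken from our stack. The key property is deny-by-default: a tool has to be explicitly granted to an agent before it can be called.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class ToolNotPermitted(Exception):
    """Raised when an agent calls a tool outside its allowlist."""


@dataclass(frozen=True)
class AgentScope:
    # Hypothetical agent identity plus its explicit tool allowlist.
    agent_id: str
    allowed_tools: frozenset[str]


@dataclass
class ToolDispatcher:
    # Registry of every tool the platform knows about (hypothetical names).
    registry: dict[str, Callable[..., Any]] = field(default_factory=dict)

    def call(self, scope: AgentScope, tool: str, *args: Any, **kwargs: Any) -> Any:
        # Deny by default: the tool must be explicitly granted to this agent.
        if tool not in scope.allowed_tools:
            raise ToolNotPermitted(f"{scope.agent_id} may not use {tool!r}")
        return self.registry[tool](*args, **kwargs)


dispatcher = ToolDispatcher(registry={
    "run_integration_tests": lambda pr: f"tests run for PR {pr}",
    "deploy_production": lambda: "deployed",
})

# A PR-testing agent that can run tests and nothing else.
pr_tester = AgentScope("pr-tester", frozenset({"run_integration_tests"}))
```

With this setup, `dispatcher.call(pr_tester, "run_integration_tests", 42)` returns `"tests run for PR 42"`, while `dispatcher.call(pr_tester, "deploy_production")` raises `ToolNotPermitted`, even though the deployment tool exists in the registry. Keeping the allowlist on the agent's scope, rather than hiding tools from the registry, makes every refusal an explicit, loggable event.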

In development, our engineers spend their time on architecture, code review, and judgement calls. AI agents draft code from tickets, generate test coverage, and push branches for review. When a pull request lands, an agent runs integration tests against our live development environment: it reads the PR diff, understands the change, hits the relevant API endpoints, spins up Playwright for UI testing, and comments the results back on the PR. That agent has access to the test environment and the PR context. It has no access to production systems, customer data, or deployment controls. Engineers review the output and steer the direction, making adjustments and refinements where necessary.

Security works the same way. When a CVE is flagged in our dependencies, our pipeline automatically audits the vulnerability, bumps the affected packages, and runs the full test suite. If the upgrade breaks something, an agent picks up the failing tests, reads the error output, and fixes the application code to work with the new versions. For an ISO-audited company this is a huge efficiency gain, and it solves one of the long-standing problems I have had when maintaining platforms. Every container image we build is scanned for vulnerabilities before it reaches any environment, and monitored once it is live. A failed scan blocks the deployment. These gates are enforced by the pipeline, not by individual judgement.
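At its core, the rule that a failed scan blocks deployment is a threshold check that the pipeline enforces rather than a person. Here is a minimal sketch, assuming the scanner's report has already been parsed into a list of findings with a severity. `Finding`, `gate_image`, the placeholder vulnerability IDs, and the HIGH blocking threshold are all hypothetical choices for illustration, not any particular scanner's output format.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Ordered so that comparisons such as >= work naturally.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Finding:
    # Hypothetical shape of one scanner finding for a container image.
    vuln_id: str
    package: str
    severity: Severity


def gate_image(findings: list[Finding], block_at: Severity = Severity.HIGH) -> tuple[bool, list[Finding]]:
    """Return (allowed, blocking_findings); any finding at or above block_at fails the gate."""
    blocking = [f for f in findings if f.severity >= block_at]
    return (not blocking, blocking)


# Placeholder findings, not real CVEs.
scan = [
    Finding("VULN-EXAMPLE-1", "libexample", Severity.MEDIUM),
    Finding("VULN-EXAMPLE-2", "otherlib", Severity.CRITICAL),
]
allowed, blocking = gate_image(scan)
if not allowed:
    # In a real pipeline this would fail the job so the deployment stops.
    print("deployment blocked by:", [f.vuln_id for f in blocking])
```

Running this prints `deployment blocked by: ['VULN-EXAMPLE-2']`. Passing the threshold as a parameter keeps the policy in pipeline configuration rather than in anyone's head at deploy time.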

Beyond the CI/CD pipeline, we run virtual employees: persistent AI agents with their own personas, responsibilities, and tool access. They are hardened for security first, partitioned by sub-role, and subject to both automated AI and human approval processes. Each has its own identity and its own credential scope, enforced through short-lived tokens and network isolation. They're effective because they're scoped: each persona has exactly the tools and permissions its role requires, nothing more.

From the CEO down, everyone at Banqora works directly with LLMs almost constantly. Prompt architecture, system instructions, agentic workflows: these are core competencies across the business, on a par with reading a spreadsheet or writing an email. When your entire operation is built on this tooling, fluency is mandatory.

Where AI Falls Short

We benefit from being honest about this. Consensus seems to swing between "AI is going to solve every problem" and "AI can't do anything well"; as usual, the truth lies somewhere in between. I think we are in a unique position to give real feedback here: we have watched the trajectory on a daily basis for more than two years, and we are not limited in what we can experiment with. If something offers a potential efficiency or business edge, we will try it. Understanding exactly where AI breaks down is what separates a company that's genuinely AI-first from one that's just using the buzzword.

First, and possibly most critically: AI is an extraordinary pattern-matcher and interpolator. It synthesises existing knowledge brilliantly; it can merge concepts, optimise existing processes, and generate variations on known solutions faster than any team. But it almost always interpolates, recombining what already exists. Genuine innovation (the zero-to-one leap that creates a new product category, identifies an unserved market, or rethinks how post-trade settlement should work) requires extrapolation, and AI is measurably bad at it. The ARC-AGI-3 benchmark illustrates this. It is the first interactive reasoning benchmark designed to measure human-like learning in AI agents, and it tests skill-acquisition efficiency: can an agent learn from experience, adapt its strategy, and plan over long horizons with sparse feedback? Every puzzle is solvable by humans; AI models fail badly. The benchmark's thesis is blunt: as long as a gap exists between AI and human learning, we are still short of AGI.

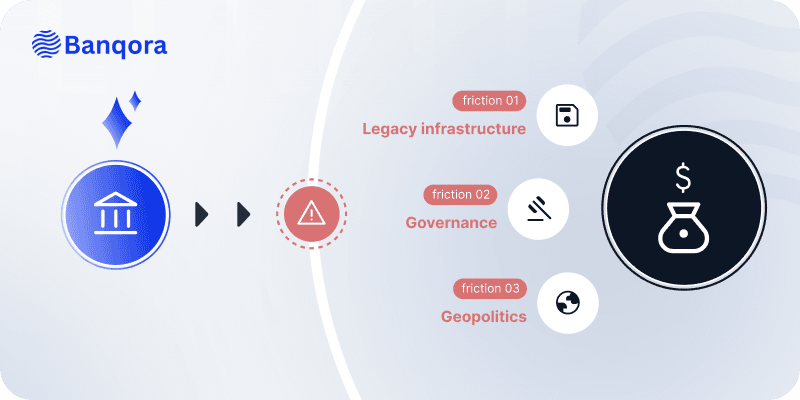

This matters doubly in capital markets, where deep domain expertise is the difference between building something useful and building something that merely looks useful. Understanding the nuances of regulatory frameworks, the mechanics of post-trade workflows, and the unwritten rules of how institutional clients actually operate takes years to accumulate. Much of that knowledge isn't written down, and AI has no shortcut to it. AI can process documents and surface patterns, but it lacks the judgement to know which patterns matter.

The real challenge, and the real value, lies at the intersection of these two gaps. Innovation alone produces ideas that sound clever but miss the market. Domain expertise alone optimises what already exists. Building something genuinely new in capital markets requires both: the intuition to see what the industry needs before it asks for it, and the deep operational knowledge to make it work within the constraints of institutional finance. That combination is rare, it's entirely human, and it's where our leadership earns its keep. AI accelerates everything around it, but it is never the source of direction.

The uncanny valley problem: anywhere Banqora's brand touches a human, whether a customer, a partner, or the general public, a person leads. Capital markets run on trust, and people can tell when something has come straight out of GPT; that devalues the brand, the relationship, and the message. AI can generate layouts and component libraries, but the decisions that build trust (visual hierarchy, brand consistency, the feeling that someone considered the experience) require human judgement.

In finance, data and security are two of the most fundamental concerns. We need real prices, real positions, and real regulatory data, with every system that touches them locked down. We engineer guardrails extensively, but the core disciplines are simpler: quality data in, quality product out, and every agent, pipeline, and credential scoped to the minimum it needs. A misconfigured system in regulated finance is a compliance event.

Context is the other constraint. Bigger context windows don't solve the problem; they just make it more expensive. Feeding an LLM everything and hoping for the best gets you slower responses, higher costs, and quietly degrading accuracy. Curating what the model sees is real engineering work, and in finance a quietly wrong output is the most dangerous kind.
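Curating what the model sees can start with something as simple as ranking candidate material by relevance and packing only what fits a fixed budget. The sketch below assumes naive keyword overlap as the relevance score and word count as a token proxy. Both are hypothetical simplifications (a real system would more likely use embeddings or a retrieval index and the model's own tokeniser), and `Chunk`, `curate_context`, and the sample data are illustrative, not our actual pipeline.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    source: str
    text: str


def _terms(text: str) -> set[str]:
    # Lowercased word set; a deliberately naive stand-in for real relevance scoring.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def approx_tokens(text: str) -> int:
    # Rough proxy: one token per whitespace-separated word (real tokenisers differ).
    return len(text.split())


def curate_context(query: str, chunks: list[Chunk], budget: int) -> list[Chunk]:
    """Pick the most relevant chunks that fit in the budget; irrelevant chunks are never sent."""
    q = _terms(query)
    scored = [(len(q & _terms(c.text)), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # highest overlap first
    selected: list[Chunk] = []
    used = 0
    for score, chunk in scored:
        cost = approx_tokens(chunk.text)
        if score == 0 or used + cost > budget:
            continue  # skip zero-relevance chunks and anything that would overflow the budget
        selected.append(chunk)
        used += cost
    return selected


# Hypothetical sample data.
chunks = [
    Chunk("positions", "Client A holds 500 shares of XYZ settled T+1"),
    Chunk("marketing", "Our brand refresh launches next quarter"),
    Chunk("settlement", "Settlement for XYZ trades fails if the custodian rejects the instruction"),
]
query = "why did the XYZ settlement fail"
```

Here `curate_context(query, chunks, budget=15)` returns only the settlement chunk, because adding the positions chunk would exceed the budget, and the marketing chunk is never included at any budget. The point of the design is that leaving material out is a deliberate, testable decision rather than a side effect of stuffing the window.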

The Point

Startups have always had to run lean, and AI has taken that lean model much further. By offloading execution, infrastructure, and repetitive work to AI, we free our people to do what humans do best: think originally, build relationships, design with intent, verify, and make the hard calls that determine whether a company succeeds.